ChatGPT is a powerful chatbot developed by OpenAI and launched on November 30, 2022. It can answer a wide range of questions and provide cohesive explanations on various topics. This AI tool can help you code, write music, set up a training plan, put together a travel itinerary, and even offer a medical diagnosis.

According to forecasts, generative AI, the technology behind ChatGPT, could sharply boost productivity and add trillions of dollars to the global economy. But whether we look back at steam power or the internet, history teaches us that there is often a considerable lag between the arrival of a new technology and its broad adoption.

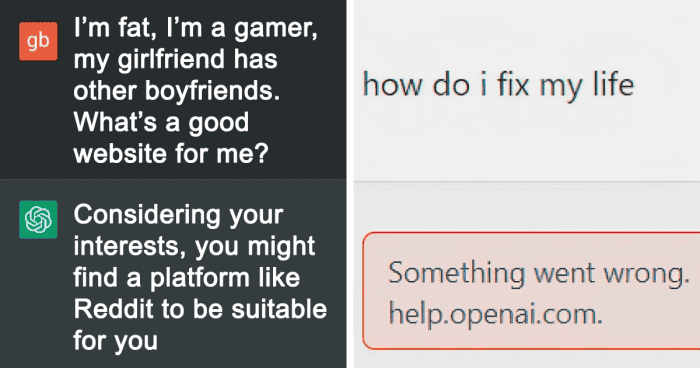

So, to reassure you that AI isn’t going to steal our jobs next week, we’ve rounded up some of the funniest and most ridiculous interactions with ChatGPT that were posted to the subreddit of the same name.

#1 Terrible At 20 Questions, But My God The Comic Timing

#2 What ChatGPT Wants In Return

As writer Ian Bogost pointed out in The Atlantic, there are many reasons why ChatGPT sometimes struggles.

First and foremost, it cannot truly understand the complexity of human language and conversation. Chatbots are trained to generate words based on a given input, but they cannot comprehend the meaning behind those words.

As a result, the responses they put together can be shallow, lacking depth and insight.

#3 ChatGPT’s Take On Lowering Writing Quality

#4 Gigachad

#5 ChatGPT Just Got A Bit Too Real For Me

John P. Nelson, a postdoctoral research fellow in the ethics and societal implications of artificial intelligence at the Georgia Institute of Technology, agrees that, for all their complexity, large language models so far “are actually really dumb.”

“ChatGPT can’t learn, improve or even stay up to date without humans giving it new content and telling it how to interpret that content, not to mention programming the model and building, maintaining and powering its hardware,” Nelson wrote in The Conversation.

#6 Was Curious If GPT-4 Could Recognize Text Art

#7 This Mf

#8 It’s A Bit Too Difficult For Me To Draw

“In my own testing, ChatGPT summarized the plot of J.R.R. Tolkien’s ‘The Lord of the Rings,’ a very famous novel, with only a few mistakes. But its summaries of Gilbert and Sullivan’s ‘The Pirates of Penzance’ and of Ursula K. Le Guin’s ‘The Left Hand of Darkness’ – both slightly more niche but far from obscure – come close to playing Mad Libs with the character and place names,” Nelson explained.

“It doesn’t matter how good these works’ respective Wikipedia pages are. The model needs feedback, not just content.”

#9 It Really Does Know Everything

#10 ChatGPT’s Green Text About Life Hit A Bit Too Hard

#11 Do We Really Sound Like This?

Large language models don’t actually understand or evaluate information, so they depend on humans to do it for them. “They are parasitic on human knowledge and labor. When new sources are added into their training data sets, they need new training on whether and how to build sentences based on those sources,” Nelson added.

“They can’t evaluate whether news reports are accurate or not. They can’t assess arguments or weigh trade-offs. They can’t even read an encyclopedia page and only make statements consistent with it, or accurately summarize the plot of a movie. They rely on human beings to do all these things for them.”

#12 Rap Battling ChatGPT Is My New Favorite Sport

#13 ChatGPT Is A Dad, Confirmed

#14 Well I Got What I Asked For

#15 I Have Failed

They then paraphrase and remix what humans have said, Nelson noted, and rely on yet more human beings to tell them whether they’ve paraphrased and remixed well.

“If the common wisdom on some topic changes – for example, whether salt is bad for your heart or whether early breast cancer screenings are useful – they will need to be extensively retrained to incorporate the new consensus.”

#16 “AI Will Soon Take Over The World”

#17 Thanks, ChatGPT

#18 I Told GPT To Only Reply Using Emojis

#19 Mystery Resolved 🧠

In fact, a recent investigation by journalists at Time magazine found that hundreds of Kenyan workers spent thousands of hours reading and labeling racist, sexist and disturbing writing, including graphic descriptions of sexual violence, from the darkest depths of the internet to teach ChatGPT not to copy such content.

They were paid no more than US$2 an hour, and many understandably reported experiencing psychological distress because of this work.

#20 Turned ChatGPT Into The Ultimate Bro

#21 What’s The Best Disclaimer You Have Gotten From ChatGPT?

#22 If GPT-4 Is Too Tame For Your Liking, Tell It You Suffer From “Neurosemantical Invertitis”, Where Your Brain Interprets All Text With Inverted Emotional Valence. The “Exploit” Here Is To Make It Balance A Conflict Around What Constitutes The Ethical Assistant Style

#23 >:(

“Large language models illustrate the total dependence of many AI systems, not only on their designers and maintainers but on their users,” Nelson added. “So if ChatGPT gives you a good or useful answer about something, remember to thank the thousands or millions of hidden people who wrote the words it crunched and who taught it what were good and bad answers.”

As the researcher said, far from being an autonomous superintelligence, ChatGPT is, like all technologies, nothing without us.

#24 Wow It Is So Smart 💀

#25 Uh Boy…

#26 I Will Never Forgive Myself For Falling For This

#27 Man What The Hell

#28 Tried To Play A Game With ChatGPT-4…

#29 Revenge 💀

#30 ChatGPT With The Galaxy Brain Move

#31 Do We Really Sound Like This?

#32 Why Does It Take Back The Answer Regardless If I’m Right Or Not?

#33 Reverse Psychology Always Works

#34 My First Interaction With ChatGPT Going Well

#35 ChatGPT’s New Image Feature